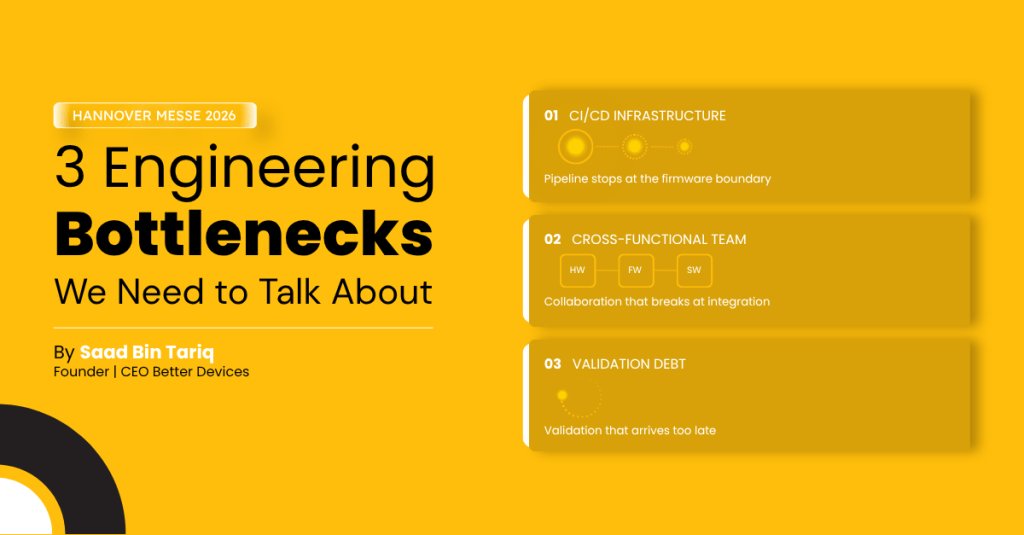

Every year, Hannover Messe fills its halls with the latest hardware innovations. But behind the polished demos, most engineering teams are quietly fighting the same three battles. We are too.

Hannover Messe is the one time of year when the hardware industry exhales. Product leads, VPs of Engineering, and CTOs converge not just to see what’s new but to quietly compare notes on what’s still broken.

I’ve had hundreds of those conversations over the years. And year after year, three bottlenecks come up in almost every one of them. They aren’t glamorous. They don’t have a flashy booth. But they are slowing teams down, compounding rework, and narrowing the window to ship.

Here’s what I think the industry needs to stop dancing around and start fixing.

Bottleneck 1: CI/CD infrastructure that stops at the firmware boundary

Ask any embedded engineering team whether they have CI/CD and most will say yes. Ask them whether it actually runs on hardware (not a simulator, not a virtual target) and the room gets quiet.

The software world solved continuous integration a decade ago. You push a commit, a pipeline runs, tests execute, and you know within minutes whether you broke something. Embedded systems never got that memo. Most teams are still manually flashing devices, running validation by hand, and treating HIL (hardware-in-the-loop) testing as a once-per-sprint ritual rather than a continuous gate.

“The industry is dealing with a double standard: software teams get instant feedback loops, hardware teams wait days. That gap is not inevitable; it’s an infrastructure problem.”

The consequence isn’t just slower development. It’s that bugs survive to late-stage validation or, worse, to the field, because the feedback loop is too long to catch them early. When a hardware defect surfaces two months before launch, you don’t fix it quickly. You delay, rework, or ship risk.

What fixing this actually looks like

It means building a CI/CD pipeline where a commit to firmware triggers an automated test run on real target hardware, not a simulator. Where HIL rigs are integrated into the same toolchain as your software tests. Where a failing hardware test blocks a merge, not a release meeting.

This is not science fiction. We’ve helped a team catch embedded system regressions in hours instead of weeks. The investment is real, but it’s measured against the cost of one delayed launch, not the cost of a fancy CI server.

Bottleneck 2: Cross-functional teams that only collaborate on paper

The org chart says Hardware, Firmware, and Software work together. The Gantt chart says they’re in sync. Then you get to integration week and discover that the firmware team built to a hardware interface that changed three sprints ago, and the software team’s driver assumptions are three revisions out of date.

This is not a people problem. It is a systems problem. And it is endemic to hardware development.

Software product development solved cross-functional collaboration mostly by moving to short sprints, shared backlogs, and continuous integration. Hardware teams tried to copy that playbook and hit a wall, because hardware has lead times, tooling constraints, and physical dependencies that software doesn’t. You can’t spin up a new PCB the way you spin up a new microservice.

“Agile was not designed for atoms. But that doesn’t mean hardware teams are doomed to waterfall. It means they need a different version of the same principles.”

The gap shows up in predictable ways. Hardware gets frozen before firmware is validated against it. Firmware APIs get locked before the software team understands what they need. Mechanical constraints emerge late. Test environments don’t match production configurations. Each of these is a coordination failure masquerading as a technical problem.

The interventions that actually work

Not daily standups; everyone already has those. What actually works is a single model-based reference implementation that ties the product spec together across domains. Hardware, firmware, and software all work from the same source of truth, so when one discipline changes a shared assumption, every other discipline sees it immediately rather than discovering it weeks later at integration.
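A full model-based reference is a big investment, but the core mechanism can be sketched in a few lines. The Python example below is a much simpler stand-in, not a description of any particular tool: a shared interface definition is fingerprinted, each consuming team pins the fingerprint it built against, and a check fails as soon as the definition drifts. The register names, offsets, and function names are all hypothetical.

```python
import copy
import hashlib
import json

# Hypothetical shared interface definition owned jointly by all disciplines.
INTERFACE_SPEC = {
    "name": "motor_controller",
    "registers": {
        "STATUS": {"offset": 0x00, "width_bits": 16},
        "SPEED_SETPOINT": {"offset": 0x04, "width_bits": 32},
    },
}


def fingerprint(spec: dict) -> str:
    """Short, stable hash of the spec, independent of dictionary key order."""
    canonical = json.dumps(spec, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


def check_pinned(spec: dict, consumer: str, pinned: str) -> None:
    """Raise if the consumer was built against a different spec revision."""
    actual = fingerprint(spec)
    if actual != pinned:
        raise RuntimeError(
            f"{consumer} pinned interface {pinned}, but the spec is now {actual}"
        )


if __name__ == "__main__":
    # The firmware team pins the revision it built against.
    firmware_pin = fingerprint(INTERFACE_SPEC)
    check_pinned(INTERFACE_SPEC, "firmware", firmware_pin)  # passes silently

    # Hardware later moves a register; the firmware check now fails loudly.
    revised = copy.deepcopy(INTERFACE_SPEC)
    revised["registers"]["STATUS"]["offset"] = 0x08
    try:
        check_pinned(revised, "firmware", firmware_pin)
    except RuntimeError as exc:
        print("drift detected:", exc)
```

Run as part of every build, a check like this turns a changed assumption into a same-day failure instead of an integration-week surprise.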

It also means synchronised increments. Hardware, firmware, and software should be building to the same testable milestone at the same time, not sequentially. This requires more planning upfront, but it replaces the scramble at integration with predictable, contained reviews.

Bottleneck 3: Validation debt that ships with the product

There is a version of every embedded product that works perfectly in the lab. It performs beautifully under nominal conditions. It passes every test the team designed for it.

Then it goes into the field. And it meets real power rails. Real RF interference. Real temperature extremes. Real users doing things no one anticipated. And it fails in ways the lab never predicted.

This is not bad luck. It is validation debt: the gap between how thoroughly a system was actually tested and how thoroughly it needed to be tested before it was trusted with real-world conditions. Most teams incur this debt not out of negligence, but because validation is treated as a phase rather than a continuous practice.

“Teams that validate continuously, not just at milestones, don’t eliminate failures. They shrink the distance between a failure and its discovery. In hardware, that distance is everything.”

The worst version of this plays out post-launch. A field failure in a medical device, an industrial controller, or a consumer product doesn’t just cost money. It costs trust, regulatory standing, and sometimes a product line. The teams who end up in that position almost never skipped testing on purpose. They just deferred it incrementally, sprint by sprint, until deferral became the default.

Continuous validation as a discipline

The shift is conceptual before it’s technical. Validation has to move from a gate at the end of development to a thread running through it. Every sprint should produce a testable artifact. Every interface should have a test. Every regression should surface before it compounds.

Technically, this means HIL environments that reflect real operating conditions, not ideal ones. It means test coverage that includes edge cases, not just happy paths. And it means treating validation infrastructure as a product in its own right: something that gets maintained, extended, and owned.
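One small way to keep edge cases from being optional is to generate the test matrix from the operating envelope rather than writing cases by hand. The Python sketch below shows the idea. The voltage and temperature limits are placeholder numbers, not values from any real product, and `build_matrix` is a hypothetical helper.

```python
from itertools import product

# Hypothetical operating envelope; substitute the limits from your own spec.
SUPPLY_VOLTS = {"min": 4.5, "nominal": 5.0, "max": 5.5}
AMBIENT_C = {"min": -40, "nominal": 25, "max": 85}


def build_matrix(**axes):
    """Cross every level of every axis and flag the all-extreme corner cases."""
    names = list(axes)
    cases = []
    for combo in product(*(axes[n].items() for n in names)):
        # combo holds one (label, value) pair per axis, in the order of names.
        labels = {n: label for n, (label, _) in zip(names, combo)}
        values = {n: value for n, (_, value) in zip(names, combo)}
        cases.append({
            "labels": labels,
            "values": values,
            # A corner is a case where no axis sits at its nominal level.
            "is_corner": all(label != "nominal" for label in labels.values()),
        })
    return cases


if __name__ == "__main__":
    matrix = build_matrix(supply_volts=SUPPLY_VOLTS, ambient_c=AMBIENT_C)
    for case in matrix:
        tag = "corner" if case["is_corner"] else "      "
        print(tag, case["values"])
```

With two axes at three levels each, this yields nine cases, four of them corners and only one the all-nominal happy path. Adding a new stress axis to the envelope extends the matrix automatically, which is what makes the coverage hard to quietly defer.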

Let’s have this conversation at Hannover Messe

I’ll be at Hannover Messe this year specifically to have these conversations: not to demo a product or hand out brochures, but to talk through what your team is actually running into and share what’s worked for us.

If any of this resonates, whether you’re dealing with a CI/CD gap, a cross-functional coordination problem, or validation debt that’s starting to feel expensive, I’d like to meet.

Reach out before the show to book time with me directly. Not a pitch, but a working conversation, engineer to engineer.