If you’ve just been handed a working PoC and told to “take it to production,” this article is for you.

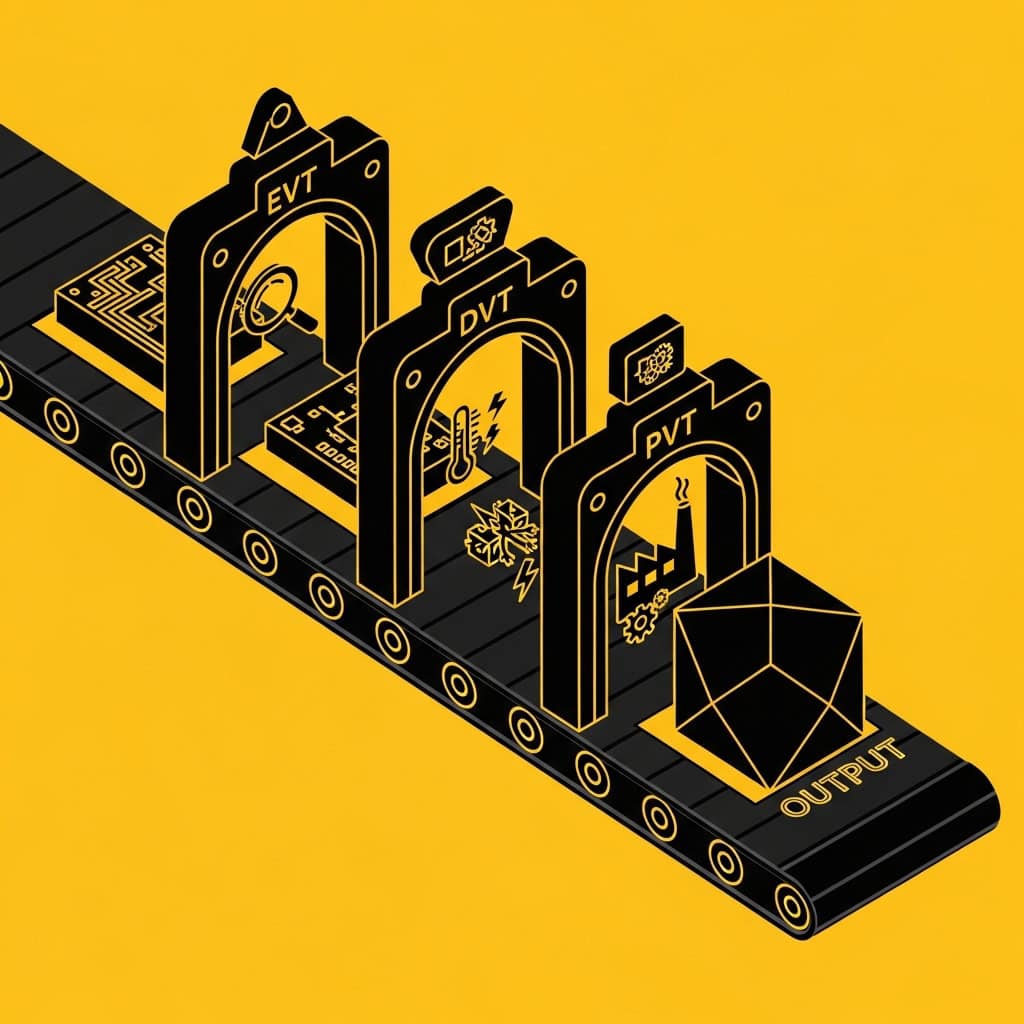

The hardware development lifecycle, from Proof of Concept through Engineering Validation Testing, Design Validation Testing, and Production Validation Testing into Mass Production, is a well-established framework. But in our experience working across industrial IoT, consumer electronics, and automotive embedded systems, it’s also one of the most misunderstood. Teams enter each stage without a clear picture of what it’s for, what it needs to prove, and, critically, which decisions made in the previous stage are going to come back and hurt them.

This guide breaks down each stage in plain terms: what it is, what it’s actually testing, what you need to have completed before it starts, and the specific failure patterns we see most often at each transition.

It’s written for hardware engineering leads, CTOs at product companies, and product managers who are responsible for a device program and want to understand how the journey from PoC to the manufacturing floor actually works.

The Lifecycle at a Glance

Before we go deep on each stage, here’s the full picture:

| Stage | Full Name | Primary Question |
| --- | --- | --- |
| PoC | Proof of Concept | Does the core technology work at all? |
| EVT | Engineering Validation Testing | Does our design implementation work as intended? |
| DVT | Design Validation Testing | Does it work reliably, across all conditions, at scale? |
| PVT | Production Validation Testing | Does the manufacturing process produce devices that meet spec? |
| MP | Mass Production | Can we produce it at volume, consistently? |

Each stage has a different question, a different owner, and a different set of criteria for what “done” means. Running them in the wrong sequence, skipping one, or failing to be clear about what each stage is for is how programs end up in expensive re-spins.

PoC — Proof of Concept

What It’s For

The PoC stage exists to answer one question: is the core technology capable of doing what the product needs it to do?

Not “does the product work.” Not “is it manufacturable.” Not “does it meet compliance.” Just: is the underlying technical approach feasible?

This distinction matters because it defines what a successful PoC looks like, and the most common PoC failure we see is a team answering a different question from the one it needed to answer.

What It Should Prove

A PoC should validate the critical technical assumptions your product is built on. If your product is a wireless sensor that needs to achieve a specific measurement accuracy at a specific battery life, those two things need to be measured and confirmed at PoC, under representative conditions. Not estimated. Not assumed. Measured.

The specific claims a PoC needs to substantiate will vary by product, but the principle is consistent: identify the technical assumptions that, if wrong, would require you to fundamentally rethink the product architecture. Then test those assumptions directly, before anything else.
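As a minimal sketch of what "measured against defined acceptance criteria" can look like in practice, the Python below compares measurements with limits and flags anything that was never measured. The criterion names, limits, and measured values are hypothetical placeholders, not figures from a real program.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    """One acceptance criterion: a named limit and the direction that counts as good."""
    name: str
    unit: str
    limit: float
    higher_is_better: bool

    def passes(self, measured: float) -> bool:
        if self.higher_is_better:
            return measured >= self.limit
        return measured <= self.limit


# Hypothetical criteria and measurements, for illustration only.
CRITERIA = [
    Criterion("measurement_error", "percent", 2.0, higher_is_better=False),
    Criterion("battery_life", "days", 365.0, higher_is_better=True),
]
MEASURED = {"measurement_error": 1.4, "battery_life": 310.0}


def evaluate(criteria, measured):
    """Return {name: 'pass' | 'fail' | 'not measured'} for every criterion."""
    report = {}
    for c in criteria:
        if c.name not in measured:
            # An estimate or an assumption does not count as a result.
            report[c.name] = "not measured"
        else:
            report[c.name] = "pass" if c.passes(measured[c.name]) else "fail"
    return report


report = evaluate(CRITERIA, MEASURED)
for name, status in report.items():
    print(f"{name}: {status}")
```

Treating "not measured" as its own outcome, rather than silently skipping it, keeps untested assumptions visible when the PoC is reviewed.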

What a Good PoC Produces

Beyond the working hardware, a PoC should produce:

- Measured performance data against clearly defined acceptance criteria

- Architecture documentation — block diagrams, interface specifications, firmware module structure — that downstream teams can build on

- A risk register identifying what was validated and what wasn’t, and what the open technical risks are for the EVT phase

- A parallel development foundation — enough documented structure that firmware, app, and backend teams can start building without waiting for EVT hardware

That last point is frequently missed. One of the highest-value things a PoC can do is remove hardware from the critical path of every other workstream. If your app team and backend team are waiting for stable EVT hardware before they can start real development, your PoC didn’t do its job.

The PoC Decisions That Cause DVT Re-Spins

Here’s a pattern we see regularly: a PoC gets built on development boards, proves the concept works, and gets handed to an EVT team. The EVT team designs a custom PCB. The custom PCB has a different power topology, a different RF front-end layout, a different firmware platform. Suddenly, none of the PoC’s performance measurements are valid anymore. The EVT team is starting from scratch — and they’re supposed to be validating a design, not investigating basic feasibility.

The specific decisions at PoC that cause this:

Component selection without EVT migration in mind. Using a development module at PoC is fine — but you should already know which production-ready component it’s going to be replaced with in EVT, and you should have sanity-checked that the performance will be equivalent.

Firmware architecture that was never meant to scale. PoC firmware written as quick demonstration code is fine — but the firmware module boundaries should be defined at PoC even if the internals are rough. Rewriting module boundaries in EVT is expensive.

No power measurement. Power is frequently the thing that kills DVT, yet it’s almost always measurable at PoC. Not measuring it defers the risk to EVT and DVT, where every fix costs more.

Undocumented architecture decisions. The engineer who decided to use a particular protocol, a particular component, a particular partitioning approach — if they don’t document why, the EVT team will make those decisions again. Sometimes differently.
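To illustrate the power point above, a first-order battery life estimate needs only a few measured currents. The sketch below assumes a simple two-state duty cycle (active and sleep) and a fixed usable fraction of the rated capacity; the function names and all numbers are illustrative assumptions, and a real device usually has more states and temperature-dependent capacity.

```python
def average_current_ma(active_ma, sleep_ma, active_s, period_s):
    """Time-weighted average current for a device that wakes once per period."""
    if period_s <= 0 or not 0 <= active_s <= period_s:
        raise ValueError("active_s must lie within a positive period_s")
    duty = active_s / period_s
    return active_ma * duty + sleep_ma * (1.0 - duty)


def battery_life_days(capacity_mah, avg_ma, usable_fraction=0.8):
    """Estimated runtime in days; usable_fraction is an assumed derating factor."""
    if avg_ma <= 0:
        raise ValueError("avg_ma must be positive")
    return capacity_mah * usable_fraction / avg_ma / 24.0


# Hypothetical measurements: 20 mA for 2 s every 10 minutes, 10 uA asleep.
avg = average_current_ma(active_ma=20.0, sleep_ma=0.01, active_s=2.0, period_s=600.0)
days = battery_life_days(capacity_mah=2400.0, avg_ma=avg)
print(f"average current: {avg:.4f} mA, estimated life: {days:.0f} days")
```

Even a rough estimate like this shows whether the battery life target is within reach or off by an order of magnitude, which is exactly the kind of answer a PoC should deliver.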

EVT — Engineering Validation Testing

What It’s For

EVT is the first build that looks like the real product. Not development boards lashed together — an actual PCB designed to the target architecture, running firmware that’s written to production standards, in an enclosure that approximates the final form factor.

The question EVT is answering is: does our design implementation work as intended?

Note what this question is not asking. It’s not asking whether the design is reliable across all conditions; that’s DVT. It’s not asking whether it can be manufactured consistently; that’s PVT. EVT is asking whether the implementation behaves as designed under nominal conditions: Does the hardware do what the schematic says it should? Does the firmware run without crashing? Does the system integrate as the architecture says it should?

What EVT Needs From the PoC

EVT needs the following inputs to run cleanly:

- A documented architecture — hardware block diagrams, interface specifications, firmware module boundaries — so the EVT design is building on a defined foundation, not guessing

- Measured PoC performance data — so the EVT team knows what numbers they’re targeting, not just whether it “works”

- A defined EVT scope — a list of what the PoC validated and what EVT needs to prove for the first time

Without these, EVT becomes a continuation of PoC — and it costs significantly more per iteration than PoC does, because the hardware is more complex and the iterations take longer.

What EVT Produces

A successful EVT exit produces:

- A hardware design that works — all functional requirements met at nominal conditions

- A validated firmware foundation — stable, structured, and documented to the level that the DVT team can extend it

- An identified and resolved issue list — a complete log of the issues found during EVT, their root cause, and the design changes made to address them

- A DVT scope — what still needs to be proven: reliability, environmental performance, compliance pre-checks

Common EVT Failure Patterns

Too many open items at EVT entry. EVT hardware is expensive to iterate. Every issue that should have been caught at PoC and wasn’t will cost more to fix in EVT. Teams that rush the PoC phase to get to EVT faster almost always spend longer in EVT overall.

Treating EVT as a PoC continuation. Some teams use EVT to continue investigating basic feasibility questions. If you’re still figuring out whether the sensor technology works during EVT, something went wrong at PoC.

Not defining the EVT exit criteria upfront. Without defined exit criteria, EVT never clearly ends. Teams add scope, add test cases, add “one more thing to check” — and the program slips. EVT exit criteria should be written before EVT starts, reviewed with stakeholders, and treated as the actual exit bar.
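One way to make exit criteria the actual exit bar is to track them as data instead of as a slide. The sketch below is an assumed, minimal structure: each criterion is open, met, or waived, and a waiver only counts if a named person approved it. The criteria listed are hypothetical examples.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    OPEN = "open"
    MET = "met"
    WAIVED = "waived"


@dataclass
class ExitCriterion:
    description: str
    status: Status = Status.OPEN
    waiver_approved_by: str = ""


def blocking(criteria):
    """Criteria that block exit: still open, or waived without a named approver."""
    return [
        c for c in criteria
        if c.status is Status.OPEN
        or (c.status is Status.WAIVED and not c.waiver_approved_by)
    ]


def can_exit(criteria):
    return not blocking(criteria)


# Hypothetical EVT exit criteria, written before EVT starts.
evt_criteria = [
    ExitCriterion("All functional requirements met at nominal conditions", Status.MET),
    ExitCriterion("Firmware runs 24 h without reset on bench", Status.OPEN),
    ExitCriterion("Enclosure fit check on final tooling", Status.WAIVED, "Program lead"),
]

for c in blocking(evt_criteria):
    print(f"BLOCKING: {c.description}")
print("EVT exit allowed:", can_exit(evt_criteria))
```

Because the list is fixed up front, adding "one more thing to check" becomes an explicit scope change that stakeholders can see, not a silent slip.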

DVT — Design Validation Testing

What It’s For

DVT is where the design gets stress-tested. The question is no longer “does it work at nominal conditions” — it’s “does it work reliably, across the full operating range, across units built to the expected manufacturing variability, in the presence of the environmental conditions it will face in the field?”

This is a fundamentally different question from EVT. A design that passes EVT with flying colours can still fail DVT. The RF performance that looked great at room temperature on the bench might fall apart at the temperature extremes of the operating specification. The firmware stability that was fine on a single unit might degrade when running for 72 hours straight. The mechanical design that fit together perfectly on the first three prototypes might bind on unit four due to tolerance stack-up.
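The tolerance stack-up case can be made concrete with two standard estimates: the worst-case sum of tolerances and the root-sum-square (RSS) value. The sketch below uses hypothetical part tolerances and a hypothetical nominal gap. RSS assumes the individual variations are independent and centred on nominal, which may not hold for a given process.

```python
import math


def worst_case(tolerances):
    """Every part at its limit in the unfavourable direction."""
    return sum(abs(t) for t in tolerances)


def root_sum_square(tolerances):
    """Statistical stack-up, assuming independent, centred variations."""
    return math.sqrt(sum(t * t for t in tolerances))


# Hypothetical stack of four parts (tolerances in mm) closing a 0.30 mm gap.
tolerances_mm = [0.10, 0.05, 0.05, 0.20]
nominal_gap_mm = 0.30

wc = worst_case(tolerances_mm)
rss = root_sum_square(tolerances_mm)

print(f"worst case: {wc:.3f} mm, RSS: {rss:.3f} mm, gap: {nominal_gap_mm:.3f} mm")
print("can bind at worst case:", wc > nominal_gap_mm)
print("within gap by RSS estimate:", rss < nominal_gap_mm)
```

In this made-up example the RSS estimate fits inside the gap while the worst case does not, which is how a design can assemble cleanly on the first few prototypes and still bind on a later unit.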

DVT is also where compliance pre-qualification happens. EMC, CE, FCC — these aren’t things you discover at DVT, but they are things you need to verify at DVT before committing to the compliance testing itself. An EMC failure discovered at formal testing after DVT is a re-spin. An EMC pre-qualification failure discovered during DVT is a design change.

What DVT Needs From EVT

DVT needs a stable design. Not a perfect design — DVT exists to find and fix the remaining issues — but a design where the fundamental architecture is settled. If the EVT team is still making architectural changes late in the EVT phase, DVT will be chaotic.

DVT also needs a test plan: the specific test cases, the environmental conditions, the acceptance criteria, and the compliance pre-qualification checklist. This should be written during EVT, not at the start of DVT.

What DVT Produces

- A validated design — proven to meet specification across the full operating envelope

- Compliance pre-qualification results — and any design changes required before formal certification

- A manufacturing input specification — the design is stable enough to share with manufacturing partners for DFM review

- A PVT scope — what needs to be validated once the first production-line units are built

Common DVT Failure Patterns

Starting DVT with an unstable design. If significant architectural changes are still being made at DVT entry, the test results will be unreliable — because you’re not testing a fixed design. The discipline of holding EVT exit criteria and not entering DVT until they’re met is one of the most important program management decisions a hardware program makes.

Discovering compliance issues for the first time at DVT. Compliance requirements should be identified at EVT and pre-qualified during DVT, so that formal testing on production-built units holds no surprises. Teams that defer compliance thinking to DVT almost always find issues that require re-spins.

Ignoring production variability. DVT units should be built with production-representative processes wherever possible — not just by hand in a lab. A design that passes DVT when built by an experienced engineer may fail PVT when built by a production line. DFM review with your manufacturing partner should happen during DVT, not after it.

PVT — Production Validation Testing

What It’s For

PVT is the first time your device is built on the actual production line, with production tooling, production processes, and production workers. The question PVT is answering is: does the manufacturing process produce devices that meet specification, consistently?

This is a completely different validation domain from DVT. DVT validated the design. PVT validates the manufacturing process. A design that performs perfectly when built in a lab can fail PVT if the production process introduces variability that the design doesn’t tolerate — solder joint quality, component placement accuracy, reflow profile sensitivity, test fixture compliance.

PVT is also where end-of-line test procedures get finalised and verified. The test fixtures, the test scripts, the pass/fail criteria — all of these need to be proven on PVT units before volume production commitments are made.

What PVT Needs From DVT

PVT needs a locked design. No design changes after PVT starts — any design change at PVT or later is a re-spin that resets the production timeline and potentially requires re-qualification.

PVT also needs a manufacturing partner who has been involved in DFM review during DVT. If your manufacturing partner is seeing the design for the first time at PVT, you’ve left a significant amount of production risk unaddressed.

What PVT Produces

- Production sign-off — confirmation that the manufacturing process produces devices within specification

- Yield data — the percentage of units passing end-of-line test, and the root cause of any failures

- Locked end-of-line test procedures — documented, validated, and ready for volume production

- A compliance certification package — formal compliance testing should be completed on PVT units, not earlier

Common PVT Failure Patterns

First-time manufacturing partner engagement at PVT. If DFM review didn’t happen during DVT, PVT will surface manufacturing issues that should have been designed out. These require design changes, which means a re-spin, which means PVT starts again.

Inadequate end-of-line test coverage. End-of-line testing exists to catch production defects before devices ship. Under-specified EOL test procedures — testing too few parameters, with too-loose tolerances — let defective units through. This is a field reliability problem waiting to happen.

Treating PVT yield as a binary pass/fail. PVT yield below target is a diagnostic opportunity, not just a failure. Every failing unit tells you something about the production process or the design’s tolerance to production variability. The data from PVT failure analysis is what enables yield improvement before MP.
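As a rough illustration of treating yield as diagnostic data, the sketch below computes first-pass yield from end-of-line results and ranks failing tests by frequency (a simple Pareto). The record format, serial numbers, and test names are assumptions made up for the example.

```python
from collections import Counter

# Hypothetical end-of-line results: (serial, names of failed tests).
# An empty list means the unit passed every test.
RESULTS = [
    ("SN001", []),
    ("SN002", ["rf_tx_power"]),
    ("SN003", []),
    ("SN004", ["sleep_current"]),
    ("SN005", []),
    ("SN006", ["rf_tx_power"]),
    ("SN007", []),
    ("SN008", []),
    ("SN009", ["rf_tx_power", "sleep_current"]),
    ("SN010", []),
]


def first_pass_yield(results):
    """Fraction of units that passed every end-of-line test."""
    if not results:
        raise ValueError("no results to evaluate")
    passed = sum(1 for _, failures in results if not failures)
    return passed / len(results)


def failure_pareto(results):
    """Failed tests ranked by how many units they affected, most frequent first."""
    counts = Counter(name for _, failures in results for name in failures)
    return counts.most_common()


fpy = first_pass_yield(RESULTS)
pareto = failure_pareto(RESULTS)

print(f"first-pass yield: {fpy:.0%}")
for test_name, count in pareto:
    print(f"{test_name}: {count} unit(s)")
```

The ranking is what turns a single yield number into a list of where to look first, whether the cause turns out to be the process or the design’s tolerance to it.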

MP — Mass Production

What It’s For

Mass Production is not a validation phase — it’s an execution phase. The design is locked. The process is proven. The test procedures are validated. MP is about producing devices at volume, consistently, within cost.

But MP is not where engineering responsibility ends. Field issues will occur. Production yield will fluctuate. Components will go end-of-life. The engineering infrastructure you built throughout the development lifecycle — particularly the test infrastructure and the field telemetry — is what determines how efficiently you manage the product once it’s in the field.

What Ongoing MP Engineering Looks Like

Production monitoring. End-of-line yield data should be tracked, trended, and reviewed regularly. A yield decline is an early warning of a process or component issue that’s better caught early.

Field telemetry. The data acquisition pipelines built into the device firmware during development are the primary tool for managing field issues post-launch. Products that don’t have robust telemetry infrastructure are flying blind in the field.

Component lifecycle management. Electronic components go end-of-life. The earlier you identify and qualify replacements, the less disruptive the transition. Active component lifecycle management is a maintenance engineering discipline that too many teams neglect until a component is already obsolete.

Systematic field RCA. When field issues occur — and they will — the response process matters enormously. A structured Root Cause Analysis methodology, combined with the test infrastructure built during development, is what distinguishes a team that resolves issues quickly and permanently from one that patches symptoms and sees the same issue recur.
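For the production monitoring point above, trending can start as simply as a rolling mean with an alert floor. The sketch below is one assumed approach, not a substitute for proper statistical process control; the window size, floor, and daily yield figures are hypothetical.

```python
from collections import deque


def yield_alerts(daily_yields, window=3, floor=0.95):
    """Return the 0-based day indices where the rolling-mean yield
    over the last `window` days falls below `floor`."""
    recent = deque(maxlen=window)
    alerts = []
    for day, value in enumerate(daily_yields):
        recent.append(value)
        # Only evaluate once a full window of data is available.
        if len(recent) == window and sum(recent) / window < floor:
            alerts.append(day)
    return alerts


# Hypothetical daily end-of-line yields showing a slow decline.
daily = [0.98, 0.97, 0.98, 0.96, 0.94, 0.93, 0.92]
alerts = yield_alerts(daily)
print("alert on days:", alerts)
```

A rolling mean smooths out a single bad day while still surfacing a sustained decline, which is the pattern that usually points to a process or component change.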

The Transitions That Kill Programs

Looking across the lifecycle, the stage transitions are where most program failures originate. Here’s the pattern we see most often:

PoC → EVT: Insufficient documentation and performance validation at PoC means the EVT team is discovering basic feasibility issues rather than validating a design. EVT becomes a PoC re-run at higher cost.

EVT → DVT: Premature DVT entry with an unstable design means test results are unreliable and design changes during DVT contaminate the test data. The program oscillates between EVT and DVT without clearly exiting either.

DVT → PVT: Late or absent DFM review means the first production run surfaces manufacturability issues that require design changes — restarting PVT and potentially re-triggering compliance testing.

PVT → MP: Undertested end-of-line procedures and insufficient field telemetry infrastructure mean field issues are slow to detect and difficult to diagnose.

The common thread in all of these is insufficient preparation at the end of each stage for the demands of the next one. Each stage needs to produce specific outputs — not just a working device, but the documented foundation that the next phase can build on cleanly.

A Note on Team Continuity

One thing that consistently makes a positive difference across the lifecycle is team continuity. The engineers who built the PoC understand the architecture decisions that were made and why. The engineers who ran EVT understand the issues that were found and how they were resolved. That knowledge doesn’t transfer completely through documentation — some of it lives in the heads of the people who did the work.

Programs that change engineering teams at each stage transition pay a knowledge transfer tax at every handoff. The decisions that weren’t documented, the assumptions that weren’t written down, the “we tried that and it didn’t work because…” — all of this gets lost, and some of it gets rediscovered expensively.

This is one of the strongest arguments for a single engineering partner across the full lifecycle. Not because different teams can’t do good work — they can — but because the overhead of the transitions is real, and it compounds.

Summary: What Each Stage Is Actually Testing

| Stage | Question Being Answered | Primary Risk If Skipped or Rushed |
| --- | --- | --- |
| PoC | Is the core technology feasible? | Wrong architecture enters EVT — expensive re-spin |
| EVT | Does the design implementation work? | Unstable design enters DVT — test results unreliable |
| DVT | Does it work reliably at scale? | Compliance issues and production failures at PVT |
| PVT | Does the manufacturing process work? | Field defects and yield problems at MP |
| MP | Can we produce it consistently? | Field issues managed reactively rather than systematically |

About Better Devices

Better Devices is a Berlin-based embedded hardware engineering consultancy. We work with startups, scale-ups, and enterprise product teams across the full device lifecycle, from feasibility and PoC through to mass production and field engineering.